Emotionally Adaptive AI Meditation Companion

Meditation apps play the same session for every user, regardless of how they feel in the moment. Aura changes that by adapting its guidance, pacing, and voice in real time to the user's emotional state.

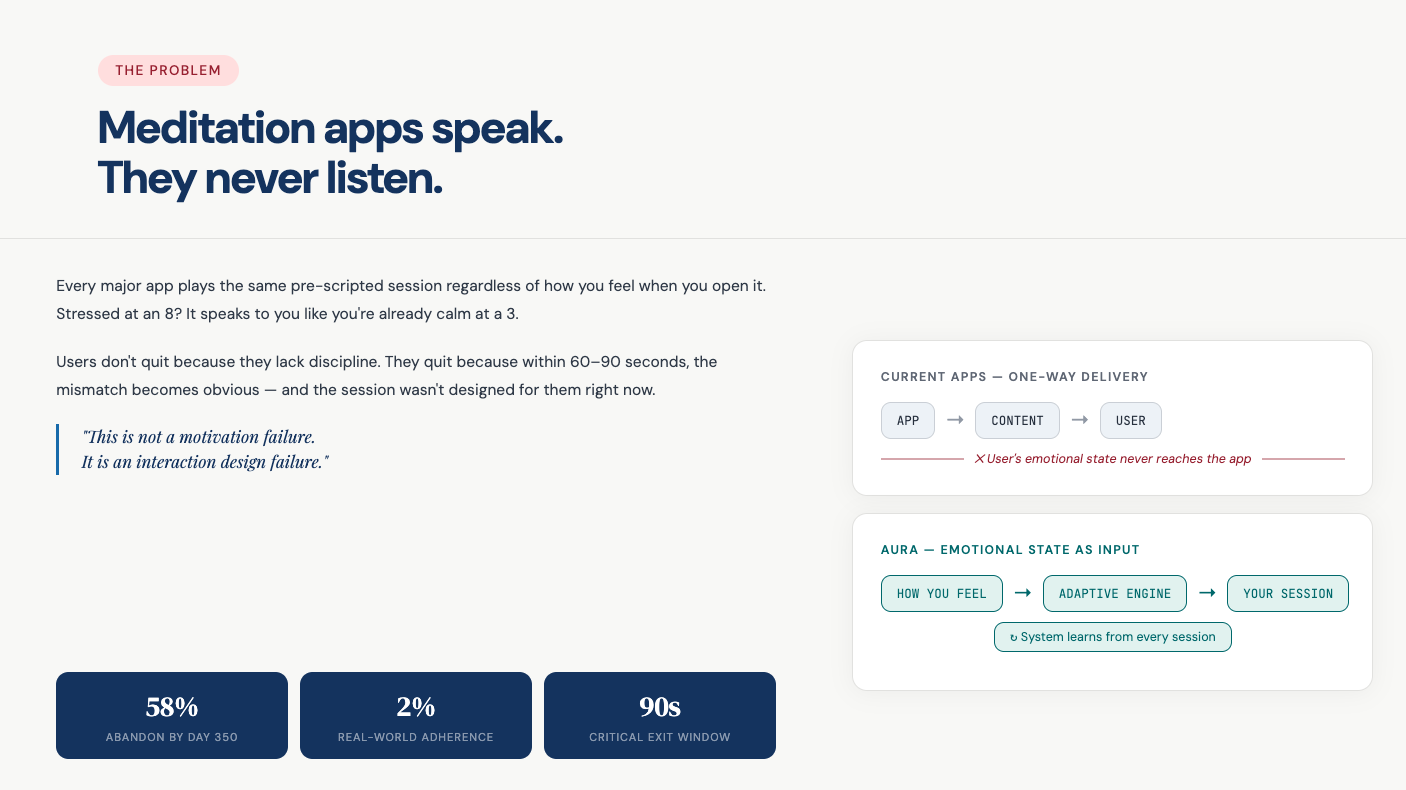

Every major app plays a pre-scripted session that ignores how you feel when you open it. Users don't quit because they lack discipline. They quit because within 60–90 seconds, the mismatch becomes obvious.

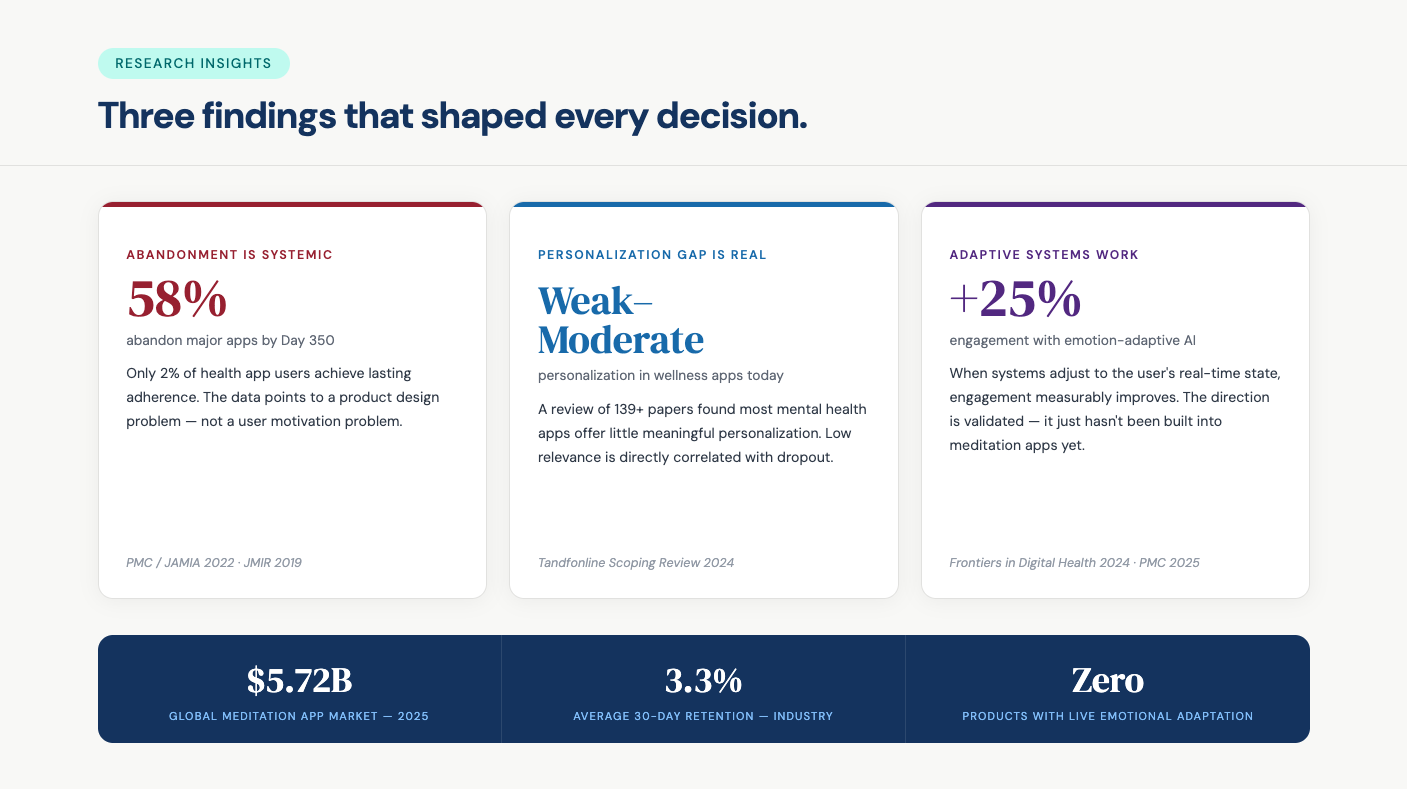

139+ studies. One consistent finding: when the system adapts to you, you stay.

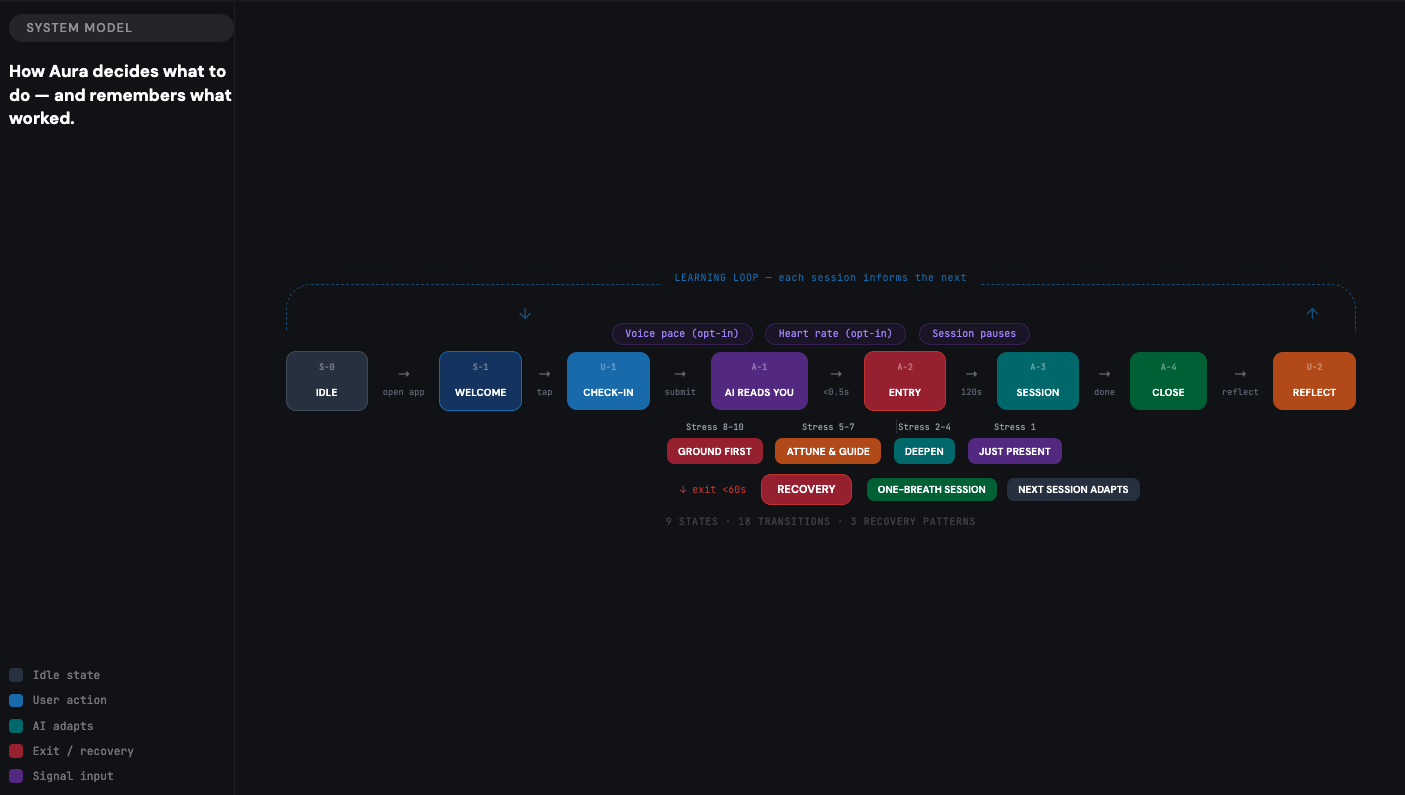

9 states. 18 transitions. 3 recovery patterns. A responsive interaction loop that adapts pacing, tone, and silence to the user's activation level.

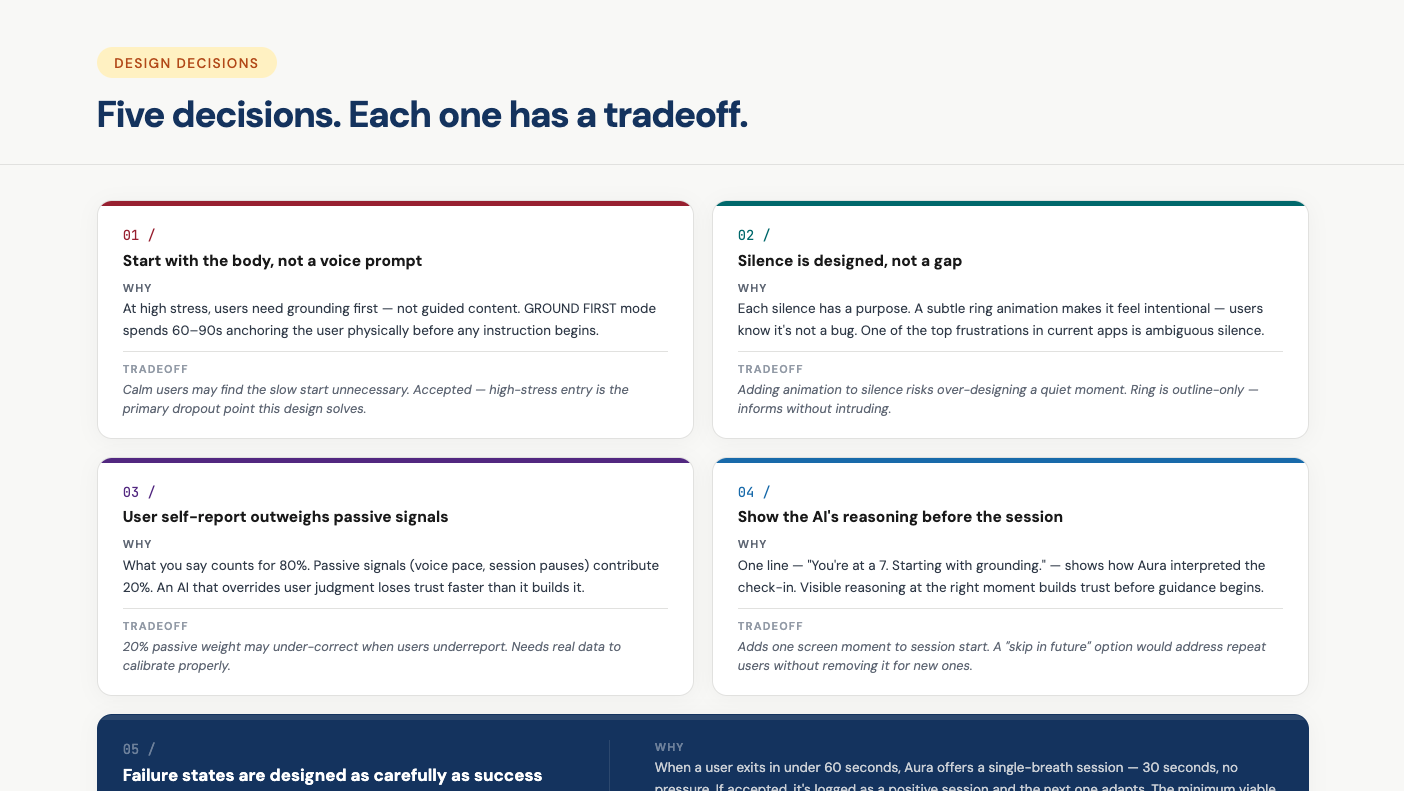

Design decisions prioritized emotional attunement, adaptive pacing, and failure recovery.

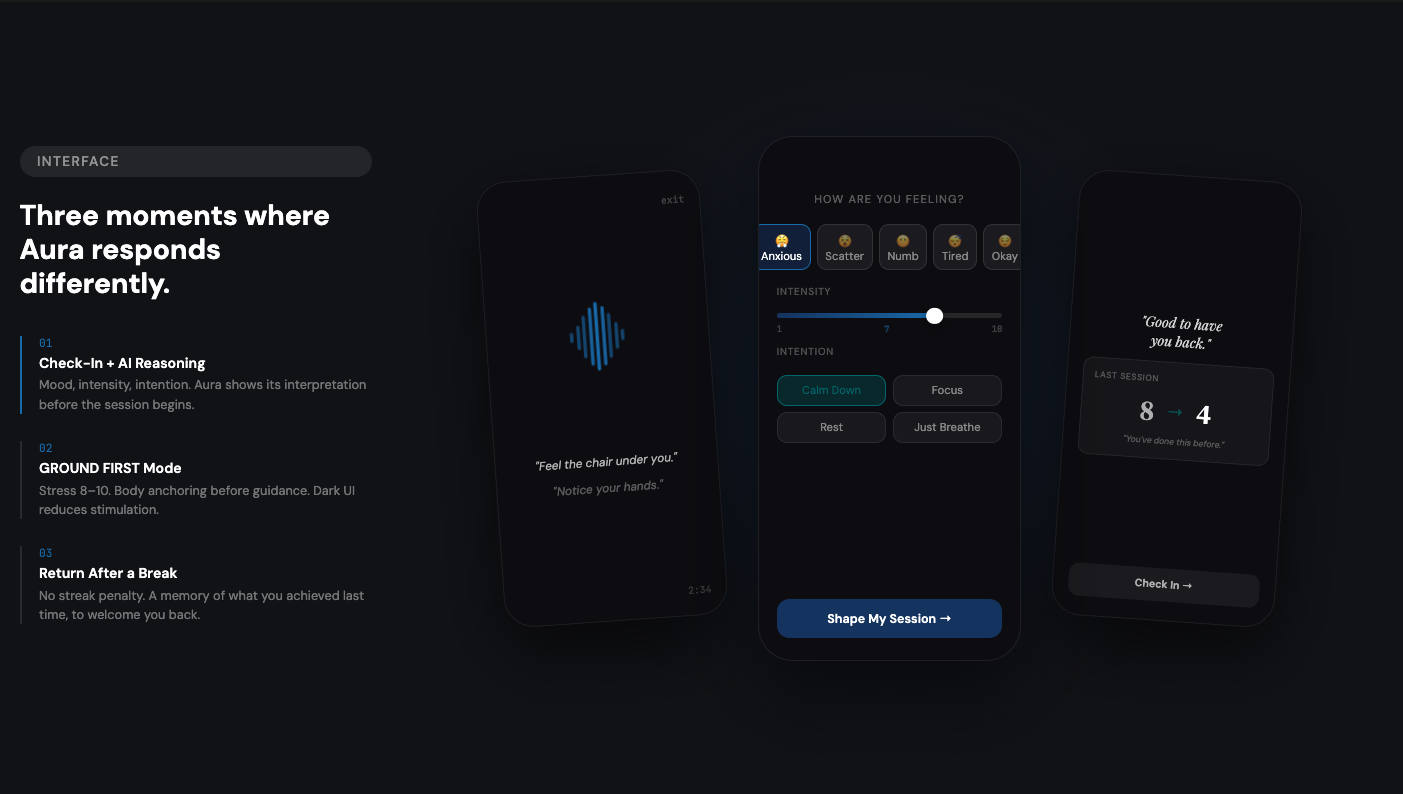

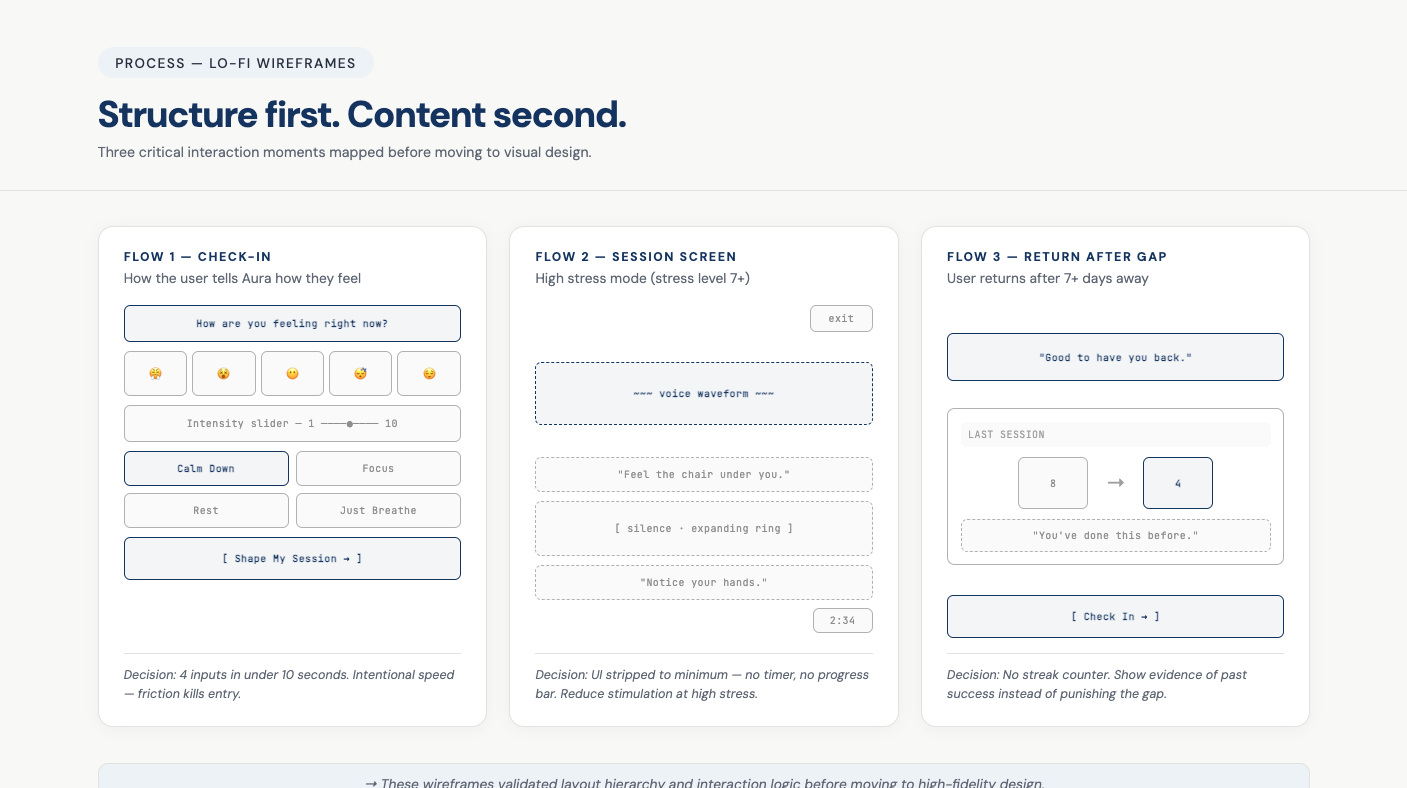

Three critical interaction moments mapped before moving to visual design.

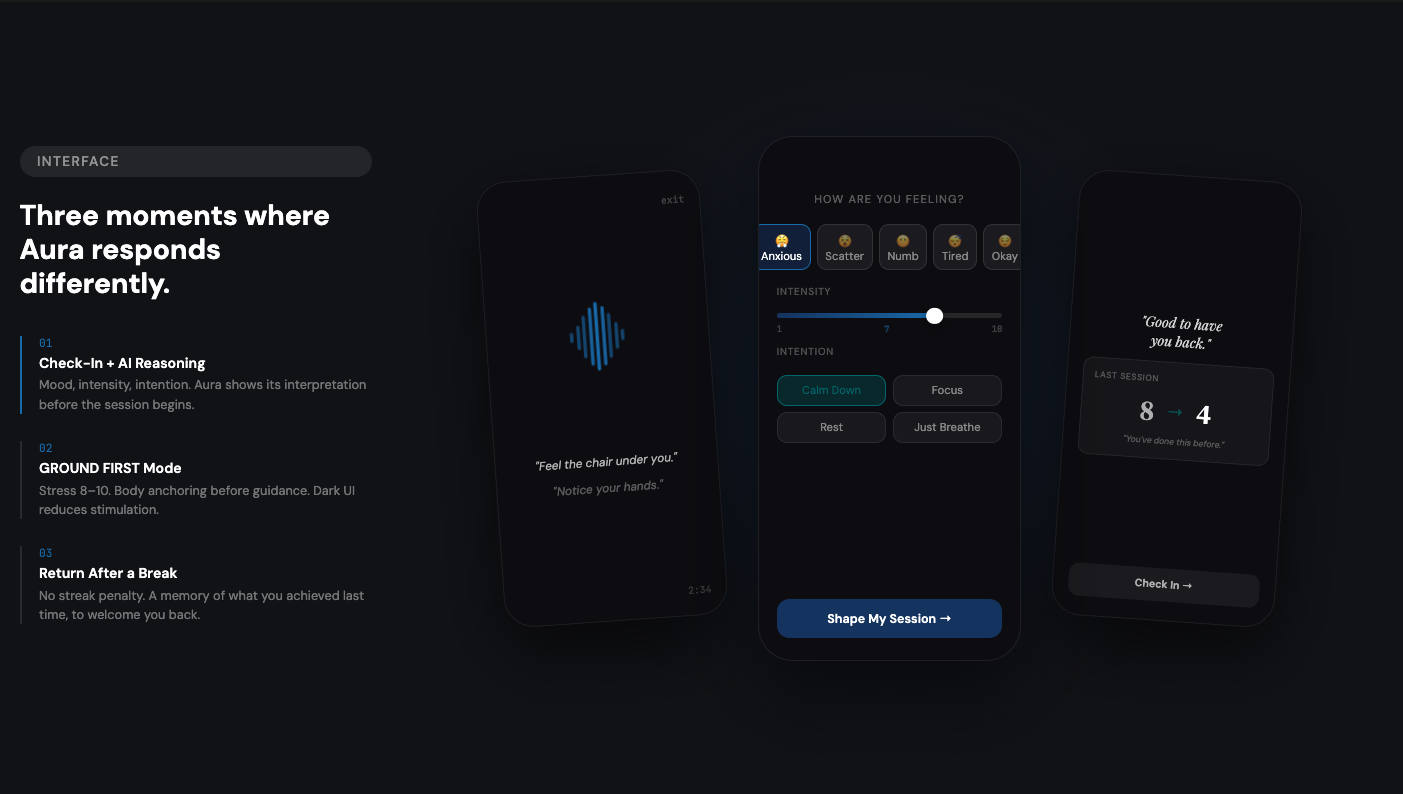

Check-in with AI reasoning. Ground-first mode for high stress. Gentle return after a break — no streak penalty.

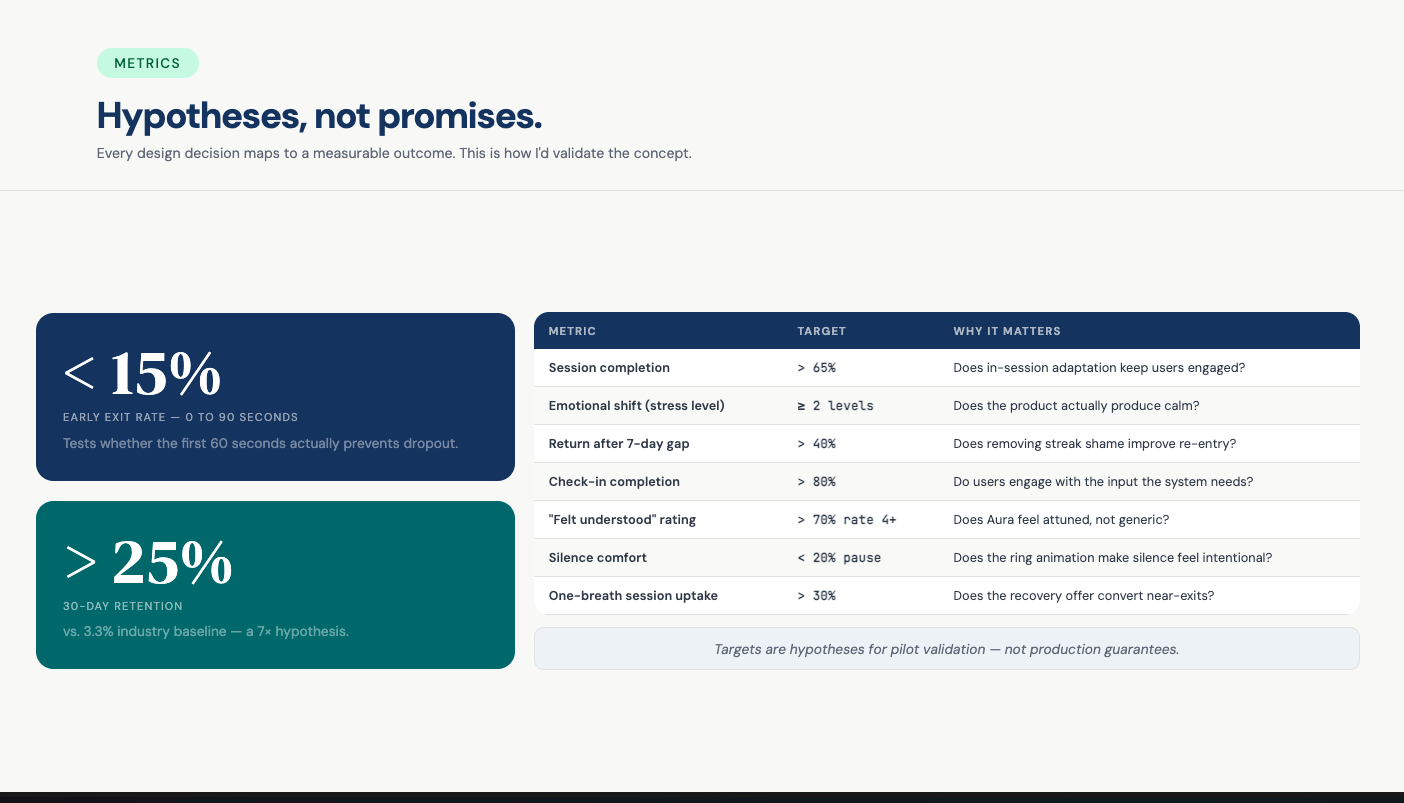

Every design decision maps to a measurable outcome. This is how I'd validate the concept.

This project explores the shift from static UX flows to adaptive AI interaction systems.

"The gap between visual designer and product designer is a thinking gap — not a tools gap."— Abiola, Verum Artifex Studios
